Install Avi Kubernetes Operator

Overview

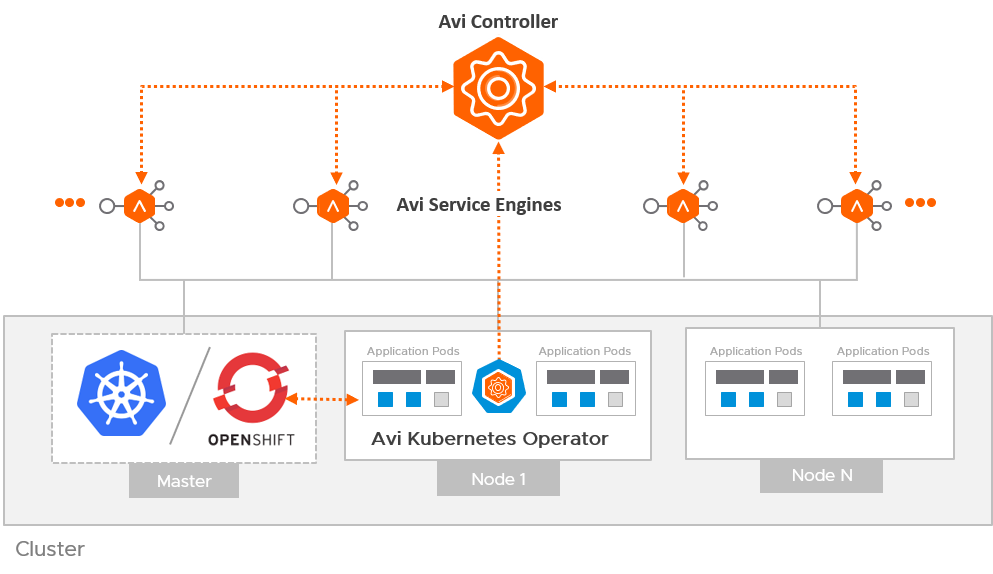

The Avi Kubernetes Operator (AKO) is an operator which works as an ingress Controller and performs Avi-specific functions in a Kubernetes/ OpenShift environment with the Avi Controller. It remains in sync with the necessary Kubernetes/ OpenShift objects and calls Avi Controller APIs to configure the virtual services.

The AKO deployment consists of the following components:

- The Avi Controller

- The Service Engines (SE)

- The Avi Kubernetes Operator (AKO)

An overview of the AKO deployment is as shown below:

Create a Cloud in Avi Vantage

The Avi infrastructure cloud is used to place the virtual services that are created for the Kubernetes/OpenShift application.

Before creating the cloud, it is recommended to have the IPAM and DNS profiles configured.

Configure IPAM and DNS Profile

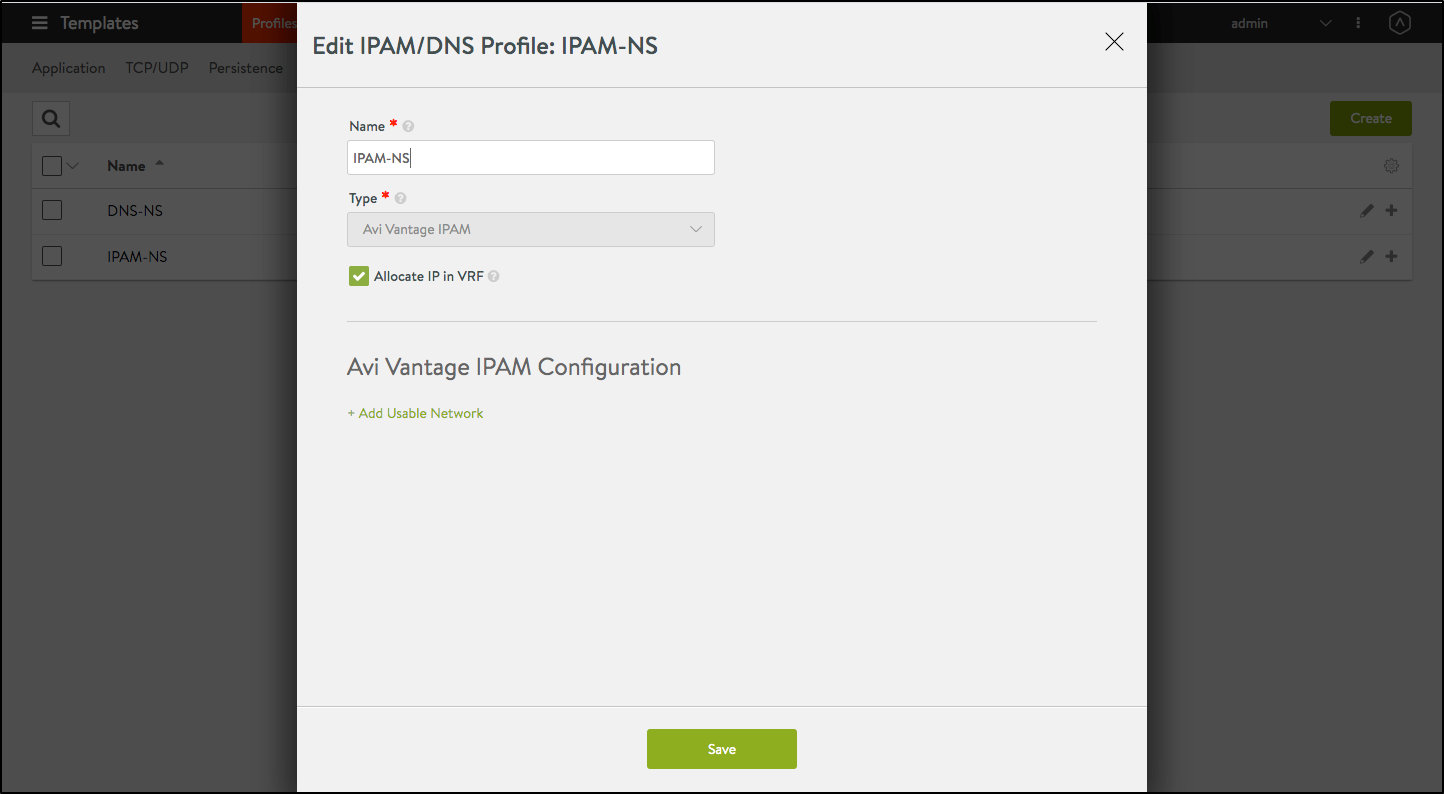

Configure the IPAM profile and select the underlying network and the DNS profile which will be used for ingresses and external services.

To configure the IPAM profile,

- Navigate to Templates > Profiles > IPAM/DNS.

- Edit the IPAM profile as shown below:

Note: The usable network for the virtual services created by the AKO instance must be provided using the vipNetworkList, subnetIP, and subnetPrefix fields during Helm installation.

- Click on Save.

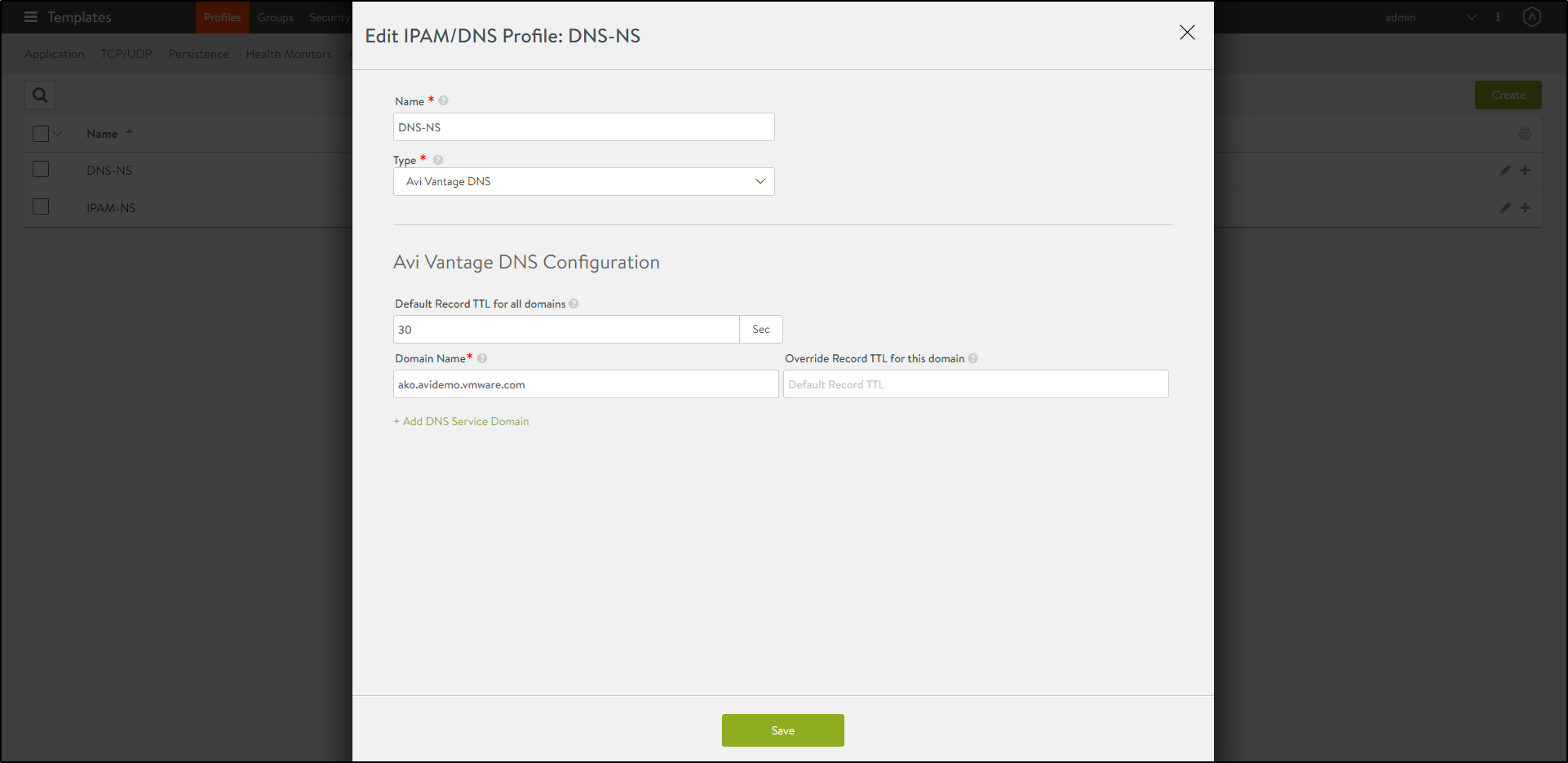

To configure the DNS profile,

- Navigate to Templates > Profiles > IPAM/DNS.

- Configure the DNS profile with the Domain Name.

- Click on Save.

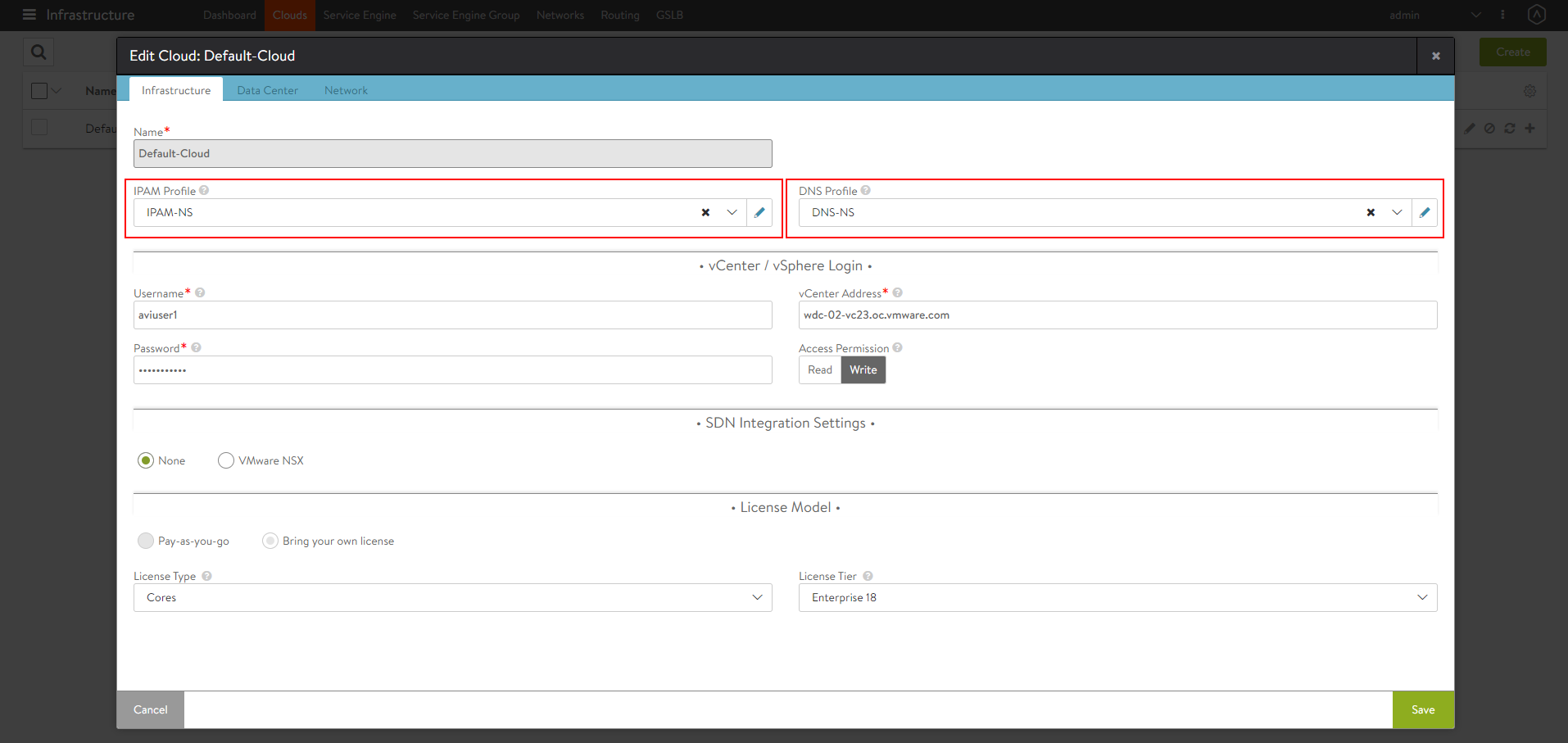

Configure the Cloud

- Navigate to Infrastructure > Clouds.

- Select the vCenter cloud and click on the edit icon.

-

Under the Infrastructure tab, select the IPAM and DNS profiles created for the north-south apps as shown below:

-

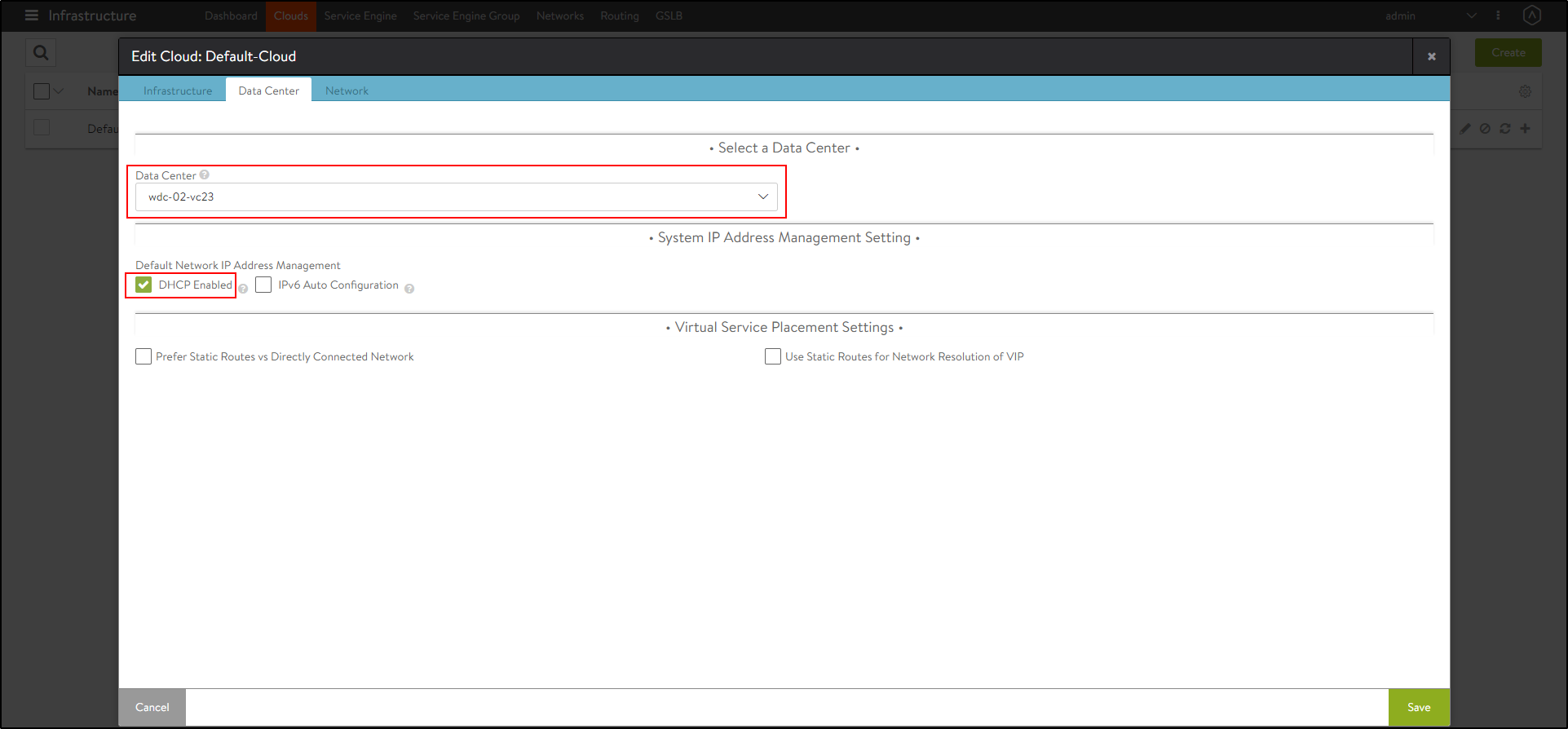

Under the Data Center tab, select the Data Center and enable DHCP as the IP address management scheme.

-

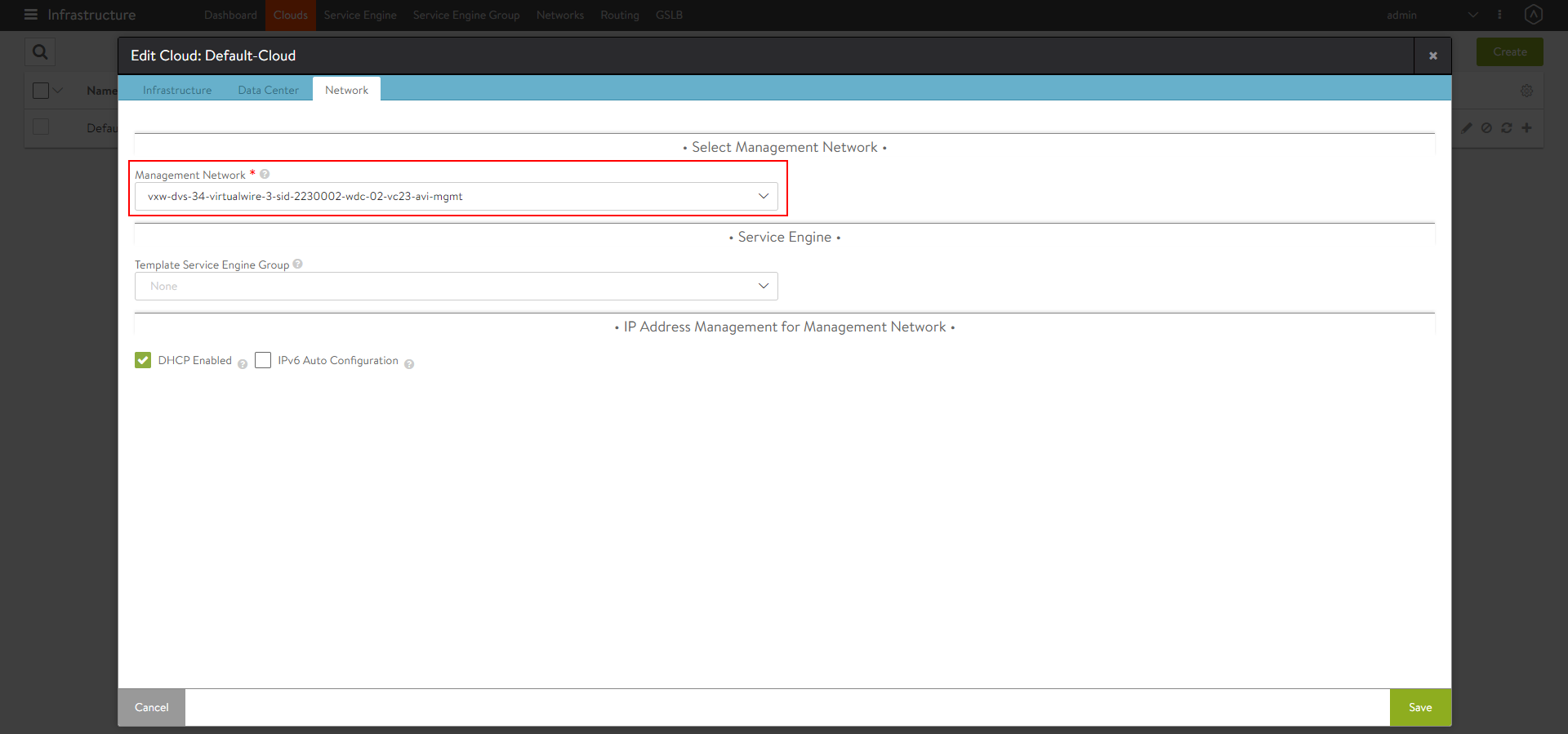

Under the Network tab, select the Management Network.

- Click on Save.

AKO in NSX-T deployments

Starting with AKO version 1.5.1, AKO supports the NSX-T write access cloud for both NCP and non-NCP CNIs. With the NCP CNI, the pods are assumed to be routable from the SE’s backend data network segments, so AKO disables the static route configuration when the CNI is specified as ncp in the values.yaml. With non-NCP CNIs, AKO assumes that static routes can be configured on the SEs to reach the pod networks. For this scenario to be valid, the SEs’ backend data network must be configured on the same logical segment on which the Kubernetes/OpenShift cluster runs.

In addition to this, AKO supports both overlay as well as VLAN backed NSX-T cloud configurations. AKO automatically ascertains if a cloud is configured with overlay segments or is used with VLAN networks.

The VLAN-backed NSX-T setup behaves the same as the vCenter write access cloud and requires no additional input from you. Overlay-based NSX-T setups, however, require a logical segment to be configured as the backend data network, and the corresponding T1 router must be specified when AKO starts, using the Helm values parameter NetworkSettings.nsxtT1LR.
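For an overlay-backed NSX-T cloud, the relevant Helm values might look like the sketch below. The nesting is inferred from the dotted parameter names in the Parameters table. The cloud name and the T1 router value are placeholders, and the exact value format expected by nsxtT1LR is an assumption to verify against the values.yaml shipped with your chart version.

```yaml
# Sketch only: placeholder values, not taken from a real deployment.
ControllerSettings:
  cloudName: my-nsxt-cloud      # hypothetical name of the NSX-T cloud in Avi

AKOSettings:
  cniPlugin: ncp                # set only when NCP is the CNI; AKO then disables static route configuration

NetworkSettings:
  nsxtT1LR: "<t1-router>"       # placeholder for the T1 router of the backend data segment
```

For a VLAN-backed NSX-T cloud, nsxtT1LR would presumably stay at its default (an empty string), since that setup needs no extra input.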

Configure SE Groups and Node Network List

SE Groups

AKO supports SE groups. Using SE groups, all the clusters can share the same VRF. Each AKO instance is mapped to a unique serviceEngineGroupName, which is used to push the routes on the SE to reach the pods. Each cluster needs a dedicated SE group, which cannot be shared by any other cluster or workload.

Note: If the label is already configured, ensure the cluster name matches the value.

Pre-requisites

- Ensure the Avi Controller is of version 18.2.10 or later.

- Create SE groups per AKO cluster (out-of-band).

Node Network List

In a vCenter cloud, nodeNetworkList is a list of PG networks that OpenShift/Kubernetes nodes are a part of. Each node has a CIDR range allocated by Kubernetes. For each node network, the list of all CIDRs has to be mentioned in the nodeNetworkList.

For example, consider the Kubernetes nodes are a part of two PG networks - pg1-net and pg2-net.

There are two nodes which belong to pg1-net with CIDRs 10.1.1.0/24 and 10.1.2.0/24.

There are three nodes which belong to pg2-net with CIDRs 20.1.1.0/24, 20.1.2.0/24, and 20.1.3.0/24.

Then nodeNetworkList contains:

- pg1-net

  - 10.1.1.0/24

  - 10.1.2.0/24

- pg2-net

  - 20.1.1.0/24

  - 20.1.2.0/24

  - 20.1.3.0/24

Note: The nodeNetworkList is used only in ClusterIP deployments of AKO in a vCenter cloud, and only when disableStaticRouteSync is set to false.

If two Kubernetes clusters have overlapping CIDRs, the SE needs to identify the right gateway for each of the overlapping CIDR groups. This is achieved by specifying the right placement network for the pools that helps the Service Engine place the pools appropriately.

Configure the fields serviceEngineGroupName and nodeNetworkList in the values.yaml file.
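As an illustration, the pg1-net/pg2-net example above could be expressed in values.yaml roughly as follows. The SE group name is hypothetical, and the entry keys networkName and cidrs are assumptions about the chart's schema; confirm them against the values.yaml produced by helm show values before use.

```yaml
# Sketch only: key names inside nodeNetworkList entries are assumed.
ControllerSettings:
  serviceEngineGroupName: cluster1-se-group   # hypothetical; one dedicated SE group per cluster

NetworkSettings:
  nodeNetworkList:
    - networkName: pg1-net
      cidrs:
        - 10.1.1.0/24
        - 10.1.2.0/24
    - networkName: pg2-net
      cidrs:
        - 20.1.1.0/24
        - 20.1.2.0/24
        - 20.1.3.0/24
```

Each entry pairs one PG network with the CIDRs of the nodes attached to it, which mirrors the grouping in the list above.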

Install Helm CLI

Helm is an application manager for OpenShift/Kubernetes. Helm charts are helpful in configuring the application.

Refer to the Helm Installation for more information.

AKO can be installed with or without internet access on the cluster.

Install AKO for Kubernetes

- Create the avi-system namespace:

kubectl create ns avi-system

Note: AKO can run in namespaces other than avi-system. The namespace in which AKO is deployed is governed by the --namespace flag value provided during Helm install.

- Add this repository to your Helm CLI:

helm repo add ako https://projects.registry.vmware.com/chartrepo/ako

Note: The Helm charts are present in VMware’s public Harbor repository.

- Search the available charts for AKO:

helm search repo

NAME     CHART VERSION  APP VERSION  DESCRIPTION
ako/ako  1.5.2          1.5.2        A helm chart for Avi Kubernetes Operator

- Use the values.yaml from this chart to edit values related to Avi configuration. To get the values.yaml for a release, run the following command:

helm show values ako/ako --version 1.5.2 > values.yaml

- Edit the values.yaml file and update the details according to your environment.

- Install AKO:

helm install ako/ako --generate-name --version 1.5.2 -f /path/to/values.yaml --set ControllerSettings.controllerHost=<controller IP or Hostname> --set avicredentials.username=<avi-ctrl-username> --set avicredentials.password=<avi-ctrl-password> --namespace=avi-system

- Verify the installation:

helm list -n avi-system

NAME            NAMESPACE
ako-1593523840  avi-system

AKO in OpenShift Cluster

AKO can be used in an OpenShift cluster to configure routes and services of type LoadBalancer.

Pre-requisites for Using AKO in OpenShift Cluster

- Ensure the OpenShift version is 4.4 or higher to perform a Helm-based AKO installation.

Note: For OpenShift 4.x releases prior to 4.4 that do not have Helm, either AKO needs to be installed manually or Helm 3 needs to be manually deployed in the OpenShift cluster.

- Ingresses, if created in the OpenShift cluster, will not be handled by AKO.

Install AKO for OpenShift

- Create the avi-system namespace.

oc new-project avi-system

- Add the AKO repository.

helm repo add ako https://projects.registry.vmware.com/chartrepo/ako

- Search for available charts.

helm search repo

NAME     CHART VERSION  APP VERSION  DESCRIPTION
ako/ako  1.5.2          1.5.2        A helm chart for Avi Kubernetes Operator

- Get the values.yaml file, then edit it and update the details according to your environment.

helm show values ako/ako --version 1.5.2 > values.yaml

- Install AKO.

helm install ako/ako --generate-name --version 1.5.2 -f /path/to/values.yaml --set ControllerSettings.controllerHost=<controller IP or Hostname> --set avicredentials.username=<avi-ctrl-username> --set avicredentials.password=<avi-ctrl-password> --namespace=avi-system

- Verify the installation.

helm list -n avi-system

NAME            NAMESPACE
ako-1593523840  avi-system

Note: Only Helm version 3.0 is supported.

Installing AKO Offline Using Helm

Pre-requisites for Installation

Ensure the following prerequisites are met:

- The Docker image is downloaded from the My VMware portal.

Note: Log in to the portal using your My VMware credentials.

- A private container registry to upload the AKO Docker image

- Helm version 3.0 or higher installed

Installing AKO

To install AKO offline using Helm,

- Extract the .tar file to get the AKO installation directory with the Helm and Docker images.

tar -zxvf ako_cpr_sample.tar.gz
ako/
ako/install_docs.txt
ako/ako-1.5.2-docker.tar.gz
ako/ako-1.5.2-helm.tgz

- Change the working directory to this path:

cd ako/

- Load the Docker image in one of your machines.

sudo docker load < ako-1.5.2-docker.tar.gz

- Push the Docker image to your private registry.

- Extract the AKO Helm package. This will create a sub-directory ako/ako which contains the Helm charts for AKO (Chart.yaml, crds, templates, and values.yaml).

- Update the Helm values.yaml with the required AKO configuration (Controller IP/credentials, Docker registry information, etc.).

- Create the namespace avi-system on the OpenShift/Kubernetes cluster.

kubectl create namespace avi-system

- Install AKO using the updated Helm charts.

helm install ./ako --generate-name --namespace=avi-system
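The values.yaml update in the offline procedure above might look like the following sketch. The parameter names come from the Parameters table, with the nesting inferred from their dotted form. The registry path is hypothetical, and the angle-bracket values are placeholders to replace with your own details.

```yaml
# Sketch only: replace every placeholder before installing.
image:
  repository: registry.example.internal/ako/ako   # hypothetical private registry path holding the AKO image

ControllerSettings:
  controllerHost: "<controller IP or Hostname>"

avicredentials:
  username: "<avi-ctrl-username>"
  password: "<avi-ctrl-password>"
```

Setting these in the file is one option; the online installation steps pass the same controller and credential values with --set flags instead.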

Upgrade AKO

AKO is stateless in nature. It can be rebooted or re-created without impacting the state of the existing objects in Avi, provided those objects do not need to be updated.

AKO is upgraded using Helm.

During the upgrade process a new docker image will be pulled from the configured repository and the AKO pod will be restarted.

On restarting, the AKO pod will re-evaluate the checksums of the existing Avi objects with the REST layer’s intended object checksums and do the necessary updates.

Notes for Upgrading to AKO 1.5.1

Refer to this section before upgrading from any version of AKO to AKO version 1.5.1 or higher:

- Refactored VIP Network Inputs: AKO version 1.5.1 deprecates subnetIP and subnetPrefix in the values.yaml (used during Helm installation), and allows specifying the information via the cidr field within vipNetworkList.

- The existing AviInfraSetting CRDs need an explicit update after applying the updated CRD schema yaml. To update existing AviInfraSettings while performing a Helm upgrade,

  - Ensure that the AviInfraSetting CRDs have the required configuration. If not, save it in the yaml files.

  - Upgrade the CRDs to provide vipNetworks in the new format.

  - Updating the CRD schema would remove the currently invalid spec.network configuration in existing AviInfraSettings. Update the AviInfraSettings to follow the new schema and apply the changed yaml.

  - Proceed with Step 2 of upgrading AKO.
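To illustrate the refactored VIP network inputs, the sketch below shows a values.yaml fragment that uses the cidr field within vipNetworkList, with the deprecated fields left as comments for comparison. The network name and subnet are hypothetical, and the networkName key is an assumption about the entry schema; check it against the values.yaml of the target chart version.

```yaml
# Sketch only: hypothetical network name and subnet.
NetworkSettings:
  # Deprecated starting with AKO 1.5.1:
  # subnetIP: 10.10.10.0
  # subnetPrefix: "24"
  vipNetworkList:
    - networkName: vip-net       # assumed key name for the VIP network
      cidr: 10.10.10.0/24        # replaces the subnetIP/subnetPrefix pair
```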

To upgrade AKO using the Helm repository,

- Run this command to update local AKO chart information from the chart repository:

helm repo update

- Helm does not upgrade the CRDs during a release upgrade. Before you upgrade a release, run the following command to upgrade the CRDs:

helm template ako/ako --version 1.5.2 --include-crds --output-dir <output_dir>

- This will save the Helm files to an output directory which will contain the CRDs corresponding to the AKO version. Install CRDs using:

kubectl apply -f <output_dir>/ako/crds/

- The release is listed as shown below:

helm list -n avi-system

NAME            NAMESPACE   REVISION  UPDATED                                  STATUS    CHART    APP VERSION
ako-1593523840  avi-system  1         2020-09-16 13:44:31.609195757 +0000 UTC  deployed  ako-1.3  1.3

- Update the Helm repo URL:

helm repo add --force-update ako https://projects.registry.vmware.com/chartrepo/ako

"ako" has been added to your repositories

Note: The charts repo is migrated to VMware’s Harbor repository and hence a force update of the repo URL is required for a successful upgrade process from 1.3.1.

- Get the values.yaml for the latest AKO version:

helm show values ako/ako --version 1.5.2 > values.yaml

- Edit the values.yaml file and update the details according to your environment. You can copy the values from the old values.yaml file used for the currently installed version.

- Upgrade the Helm chart:

helm upgrade ako-1593523840 ako/ako -f /path/to/values.yaml --version 1.5.2 --set ControllerSettings.controllerHost=<IP or Hostname> --set avicredentials.password=<password> --set avicredentials.username=<username> --namespace=avi-system

Upgrading AKO Offline Using Helm

To upgrade AKO without using the online Helm repository,

- Follow the steps 1 to 6 from Installing AKO Offline Using Helm.

- Use the following command:

helm upgrade <release-name> ./ako -n avi-system

Delete AKO

- Edit the configmap used for AKO and set the deleteConfig flag to true if you want to delete the AKO created objects. Else skip to step 2.

kubectl edit configmap avi-k8s-config -n avi-system

- Delete AKO using the command shown below:

helm delete $(helm list -n avi-system -q) -n avi-system

Note: Do not delete the configmap avi-k8s-config manually, unless you are doing a complete Helm uninstall. If you delete it, the avi-k8s-config configmap has to be reconfigured and the AKO pod has to be rebooted.
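For reference, the edit in step 1 amounts to changing a single entry in the configmap. The fragment below is a sketch that shows only that entry; it assumes the key under data is named deleteConfig, matching the flag name, and omits every other key, which should be left as it is.

```yaml
# Sketch only: the real configmap contains more keys; leave them unchanged.
apiVersion: v1
kind: ConfigMap
metadata:
  name: avi-k8s-config
  namespace: avi-system
data:
  deleteConfig: "true"   # ConfigMap values are strings, hence the quotes
```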

Parameters

The following table lists the configurable parameters of the AKO chart and their default values:

| Parameter | Description | Default Values |

|---|---|---|

| ControllerSettings.controllerVersion | Avi Controller version | 18.2.10 |

| ControllerSettings.controllerHost | Used to specify the Avi controller IP or Hostname | None |

| ControllerSettings.cloudName | Name of the cloud managed in Avi | Default-Cloud |

| ControllerSettings.tenantsPerCluster | Set to true if you want to map each Kubernetes cluster uniquely to a tenant in Avi | False |

| ControllerSettings.tenantName | Name of the tenant where all the AKO objects will be created in Avi. | Admin |

| L7Settings.shardVSSize | Shard VS size. Enum values: LARGE, MEDIUM, SMALL | LARGE |

| AKOSettings.fullSyncFrequency | Full sync frequency | 1800 |

| L7Settings.defaultIngController | Set to true if AKO is the default ingress controller | True |

| ControllerSettings.serviceEngineGroupName | Name of the Service Engine Group | Default-Group |

| NetworkSettings.nodeNetworkList | List of networks and corresponding CIDR mappings for the Kubernetes nodes | None |

| AKOSettings.clusterName | Unique identifier for the running AKO instance. AKO identifies objects it created on Avi Controller using this parameter | Required |

| NetworkSettings.subnetIP | Subnet IP of the data network | Deprecated |

| NetworkSettings.subnetPrefix | Subnet Prefix of the data network | Deprecated |

| NetworkSettings.vipNetworkList | List of network names and subnet information for the VIP network. Multiple networks are allowed only for AWS Cloud | Required |

| NetworkSettings.enableRHI | Publish route information to BGP peers | False |

| NetworkSettings.bgpPeerLabels | Select BGP peers using bgpPeerLabels, for selective virtual service VIP advertisement. | Empty List |

| NetworkSettings.nsxtT1LR | Specify the T1 router for data backend network, applicable only for NSX-T based deployments | Empty String |

| L4Settings.defaultDomain | Used to specify a default sub-domain for L4 LB services | First domainname found in cloud's DNS profile |

| L4Settings.autoFQDN | Specify the layer 4 FQDN format | default |

| L7Settings.noPGForSNI | Skip using Pool Groups for SNI children | False |

| L7Settings.l7ShardingScheme | Sharding scheme enum values: hostname, namespace | hostname |

| AKOSettings.cniPlugin | The CNI plugin being used in the Kubernetes cluster. Specify one of: calico, canal, flannel, ncp | This field is required for calico setups |

| AKOSettings.logLevel | logLevel values: INFO, DEBUG, WARN, ERROR. logLevel can be changed dynamically from the configmap | INFO |

| AKOSettings.deleteConfig | Set to true if user wants to delete AKO created objects from Avi. The deleteConfig can be changed dynamically from the configmap | False |

| AKOSettings.disableStaticRouteSync | Disables static route syncing if set to true | False |

| AKOSettings.apiServerPort | Internal port for AKO's API server for the liveness probe of the AKO pod | 8080 |

| AKOSettings.layer7Only | Operate AKO as a pure layer 7 ingress Controller | False |

| avicredentials.username | Enter the Avi Controller username | None |

| avicredentials.password | Enter the Avi Controller password | None |

| image.repository | Specify docker-registry that has the AKO image | avinetworks/ako |

Note:

- Starting with AKO version 1.5.1, the fields subnetIP and subnetPrefix are deprecated.

- The field vipNetworkList is mandatory. It is used by the IPAM provider module to allocate virtual service IPs.

- Each AKO instance mapped to a given Avi cloud should have a unique clusterName parameter. This maintains the uniqueness of object naming across Kubernetes clusters.
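Putting a few of the table entries together, a values.yaml fragment might look like the sketch below. The nesting is inferred from the dotted parameter names and the values are the defaults listed in the table, except clusterName, which is a hypothetical example. The quoting is a conservative choice, not a documented requirement.

```yaml
# Sketch only: defaults from the Parameters table, one hypothetical cluster name.
AKOSettings:
  clusterName: cluster1          # required; hypothetical, must be unique per AKO instance in a cloud
  logLevel: INFO                 # INFO, DEBUG, WARN, or ERROR
  fullSyncFrequency: "1800"

L7Settings:
  defaultIngController: "true"
  shardVSSize: LARGE             # LARGE, MEDIUM, or SMALL
  l7ShardingScheme: hostname     # hostname or namespace

L4Settings:
  autoFQDN: default

ControllerSettings:
  cloudName: Default-Cloud
  serviceEngineGroupName: Default-Group
```

The mandatory vipNetworkList and the controller credentials are left out here to keep the fragment short; they still need to be supplied for a working installation.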

Document Revision History

| Date | Change Summary |

|---|---|

| October 06, 2021 | Updated the installation and upgrade steps for AKO version 1.5.2 |

| August 31, 2021 | Updated the installation and upgrade steps for AKO version 1.5.1 |

| July 29, 2021 | Updated the installation and upgrade steps for AKO version 1.4.3 |

| May 11, 2021 | Updated the installation and upgrade steps for AKO version 1.4.2 |

| April 20, 2021 | Updated the installation and upgrade steps for AKO version 1.4.1 |

| February 12, 2021 | Updated the installation and upgrade steps for AKO version 1.3.3 |

| December 18, 2020 | Updated the step to upgrade CRDs during AKO upgrade (version 1.3) |

| November 23, 2020 | Updated the Upgrade Procedure for AKO version 1.2.1 to 1.2.3 |

| September 16, 2020 | Published the Installation Guide for AKO version 1.2.1 |

| July 20, 2020 | Published the Installation Guide for AKO version 1.2.1 (Tech Preview) |